Automated Assembly Monitoring with Synthetic Data

Manual assembly tasks often involve errors such as skipped steps, incorrect sequences, or inaccurate part placement. These mistakes can cause product failures, lower quality, and increased costs. Computer vision can automate step recognition for quality control, but collecting and labeling real-world data is expensive. Synthetic data offers a cost-effective alternative.

Method

Recent advances in computer vision enable the generation of assembly motions and the use of synthetic data to train inspection models, improving the generalization and adaptability of automated assembly monitoring. Building on this, we propose a pipeline consisting of three key modules: physics-based assembly motion generation, photorealistic assembly rendering, and inspection model training, as illustrated below.

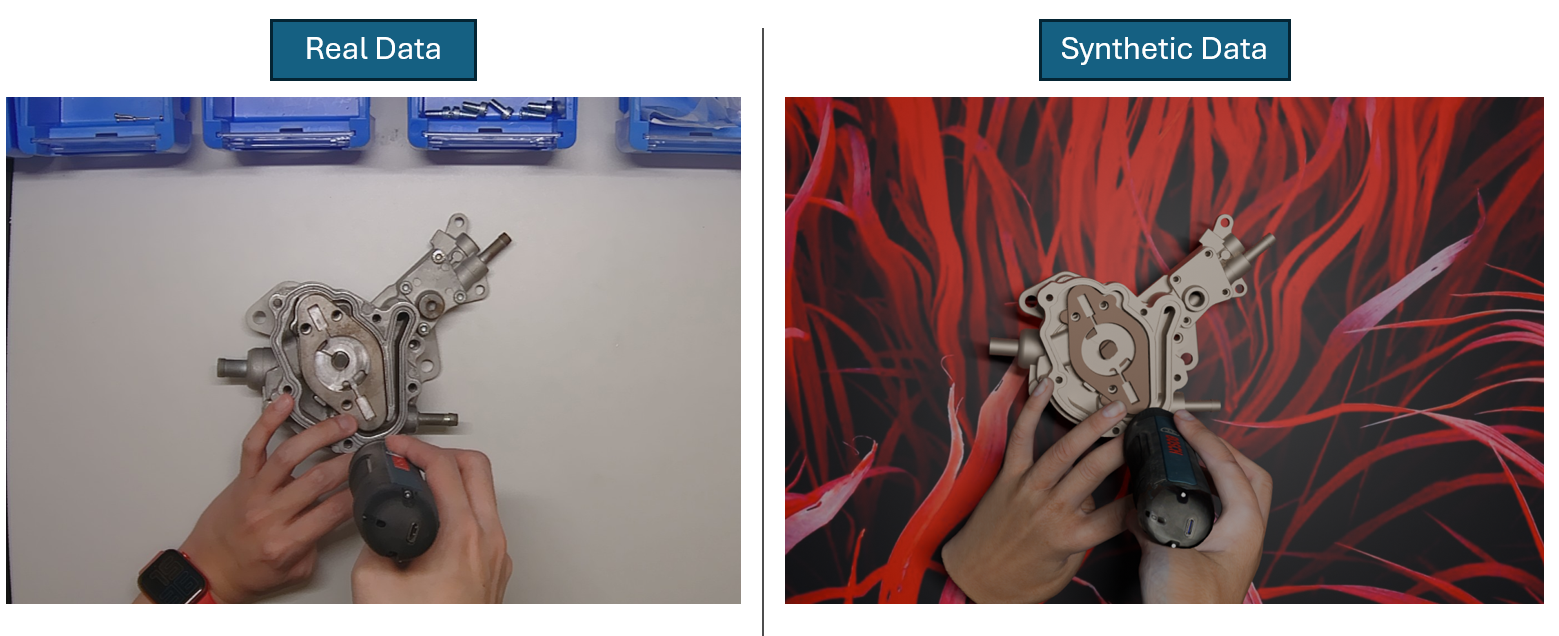

Specifically, the pipeline takes as input the CAD files of the product and tools along with their material properties, as well as the assembly sequence with the components assigned to each step. The assembly motion generation module, adapted from [1], simulates diverse assembly motions while randomizing initial states, grasping poses, and movement velocities to reflect human variability. Next, the rendering module generates high-fidelity RGB assembly sequences with realistic component and tool materials, while randomizing lighting, backgrounds, and occlusions to simulate real-world disturbances. Finally, the inspection model training module uses the synthetic data to train computer vision models for automated quality inspection.
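The randomization described above can be illustrated with a minimal Python sketch. It is not the project's implementation: the class names, field names, and value ranges (for example `speed_scale` between 0.5 and 1.5) are hypothetical and chosen only for illustration. The sketch shows how one set of motion and rendering parameters might be drawn per synthetic sequence, with a fixed seed so that a dataset plan is reproducible.

```python
import random
from dataclasses import dataclass


@dataclass
class AssemblyStep:
    """One step of the assembly sequence (hypothetical structure)."""
    name: str
    components: list[str]  # identifiers of the CAD parts assigned to this step


@dataclass
class SceneSample:
    """Randomized parameters for one synthetic sequence (all ranges are assumptions)."""
    # Motion generation: variability between human operators
    initial_offset_m: tuple[float, float, float]  # perturbation of the initial part position
    grasp_yaw_deg: float                          # rotation of the grasping pose
    speed_scale: float                            # multiplier on the nominal movement velocity
    # Rendering: real-world disturbances
    light_intensity: float
    background_id: int
    occluder_count: int


def sample_scene(rng: random.Random, n_backgrounds: int = 10) -> SceneSample:
    """Draw one set of randomized motion and rendering parameters."""
    return SceneSample(
        initial_offset_m=(
            rng.uniform(-0.05, 0.05),
            rng.uniform(-0.05, 0.05),
            rng.uniform(-0.05, 0.05),
        ),
        grasp_yaw_deg=rng.uniform(-180.0, 180.0),
        speed_scale=rng.uniform(0.5, 1.5),
        light_intensity=rng.uniform(0.3, 1.0),
        background_id=rng.randrange(n_backgrounds),
        occluder_count=rng.randint(0, 3),
    )


def build_dataset_plan(steps: list[AssemblyStep], samples_per_step: int, seed: int = 0):
    """Pair every assembly step with several randomized scenes, reproducibly."""
    rng = random.Random(seed)
    return [
        (step.name, sample_scene(rng))
        for step in steps
        for _ in range(samples_per_step)
    ]
```

In such a setup, each `(step name, SceneSample)` pair would be handed first to the motion simulator and then to the renderer; the step name doubles as the label for the rendered frames, which is what removes the need for manual annotation.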

Results

Depending on the assembly task, the method achieves a very high success rate in detecting completed assembly steps. The method can be adapted to new cases with less than two hours of manual effort.

These results highlight the effectiveness of synthetic data for automated assembly monitoring. Furthermore, the approach holds promise for handling more complex scenarios through improved understanding of hand-object interaction, including fully occluded actions (e.g., tightening a screw with a manual screwdriver) and tasks with minimal visual changes (e.g., applying grease with fingers).

Publications

[1] GraspXL: Generating Grasping Motions for Diverse Objects at Scale

For more information, please contact Hui Zhang or Julian Ferchow.